Follow-Up Performance Measurements (Q4 2018)

Thanks to funding from the Open Technology Fund we have continued work on some performance measurements for various DNS Privacy implementations. We report our new results below.

Previous work

Our initial work on performance measurements can be found here: Initial Performance Measurements (Q1 2018). This work was presented at RIPE 76: Slides, Video. Key results from this work were:

- Setup: Used modified

dnsperfwith UDP/TCP/TLS on 2 bare metal machines - Comparisons of 4 nameservers for small number of clients (10s)

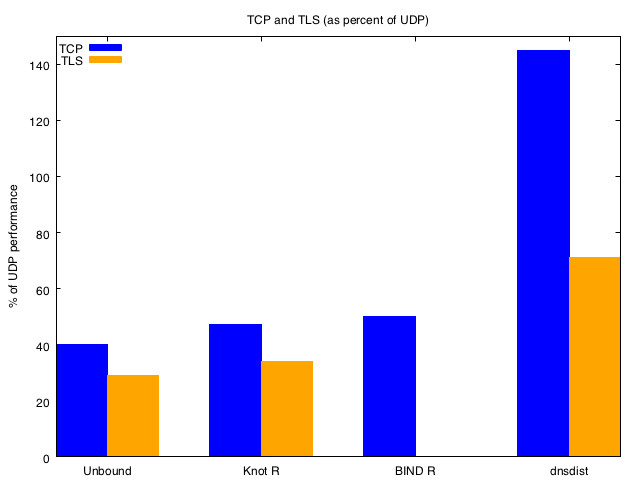

- Typically saw TCP was 40-50% of UDP throughput (except

dnsdist, which uses a different threading model and performed better) - TLS was 30-40% of UDP throughput

- Typically saw TCP was 40-50% of UDP throughput (except

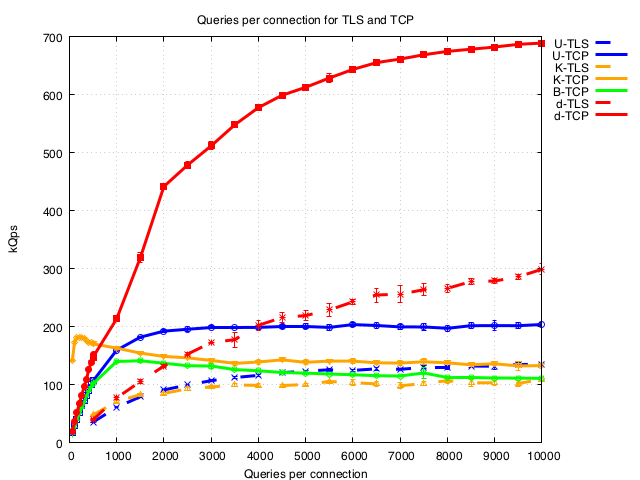

- Varying the number of queries per connection (including low numbers) shows amortisation of setup occurs between 100 or ~2000 q/conn depending on the nameserver

- Setup: Used modified

-

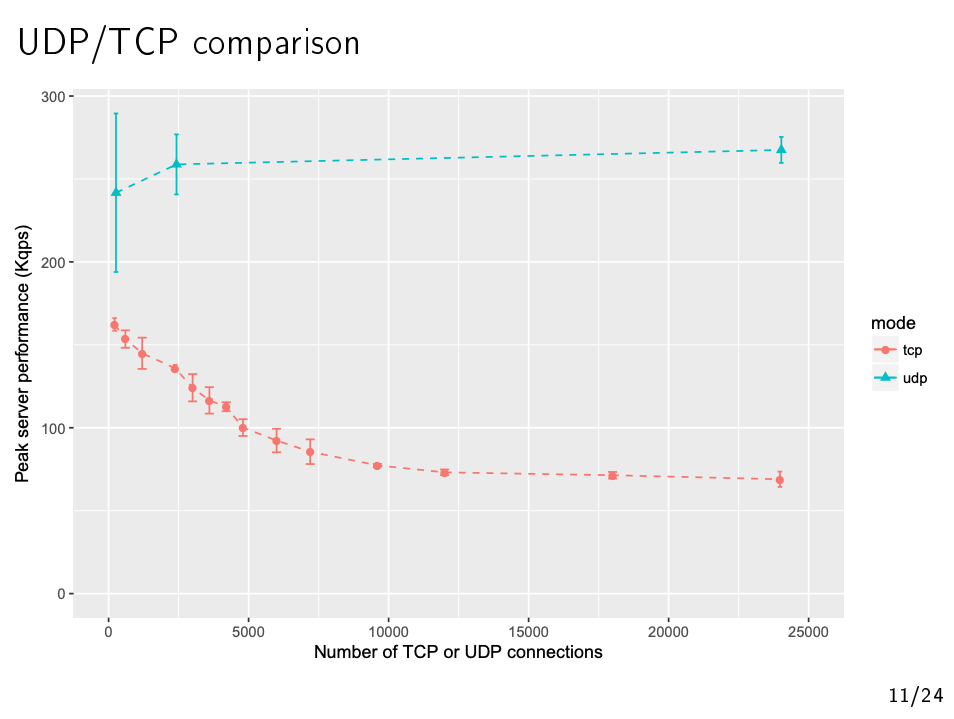

We also note some complementary related work: High-Performance DNS over TCP by Baptiste Jonglez, University Grenoble Alpes, who also presented at RIPE 76: Slides, Video. Key results from this work were:

- Setup: Measured just Unbound nameserver but used thousands of cloud VM clients. Measured just UDP/TCP (not TLS) and used a simple tcpscaler tool where TCP connections were never closed

- Determined that for Unbound TCP throughput for 25,000 clients was 25% of the UDP throughput and this decreased as the number of clients increased (see graph below)

- Eventually scaled to 6.5 million clients as a POC

Goals of this work

We had always intended to extend our work to tens of thousands of clients and were particularly intrigued by Baptiste’s results, which showed a poorer TCP throughput than we expected and an unexpected drop in throughput between 10 and 10,000 clients. However, when we looked at the details of his work it became clear that the above graph was for the case where Unbound was restricted to a single thread (our work above had used 4 threads).

So we wanted to explore the performance across the nameservers for 24,000 clients where the nameserver could effectively use all the resources of our test machine.

This work used the same lab setup as the previous work, except that we used up to 24 separate dnsperf instances running in parallel, each spawning 1000 TCP connections to reach a total of 24,000 clients. In each test 5000 queries per connection were used to ensure that the connections cycled during the test but that the handshake setup had been fully amortised.
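The load described above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function and parameter names are ours and are not dnsperf options, and the query total is the number sent over one round of connections before they are cycled, not the total for a whole test run.

```python
def load_plan(instances, connections_per_instance, queries_per_connection):
    """Return (total_clients, queries_per_round) for one test configuration.

    total_clients     -- simultaneous TCP connections across all instances
    queries_per_round -- queries sent before every connection has been cycled once
    """
    total_clients = instances * connections_per_instance
    queries_per_round = total_clients * queries_per_connection
    return total_clients, queries_per_round


# Figures taken from the text: 24 instances x 1000 connections, 5000 q/conn.
clients, queries = load_plan(24, 1000, 5000)
print(f"{clients} clients, {queries} queries per round of connections")
```

Lower client counts correspond to running fewer instances, for example `load_plan(5, 1000, 5000)` for 5000 clients.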

The following work has also been presented at RIPE 77: Slides, Video

Results - TCP vs UDP

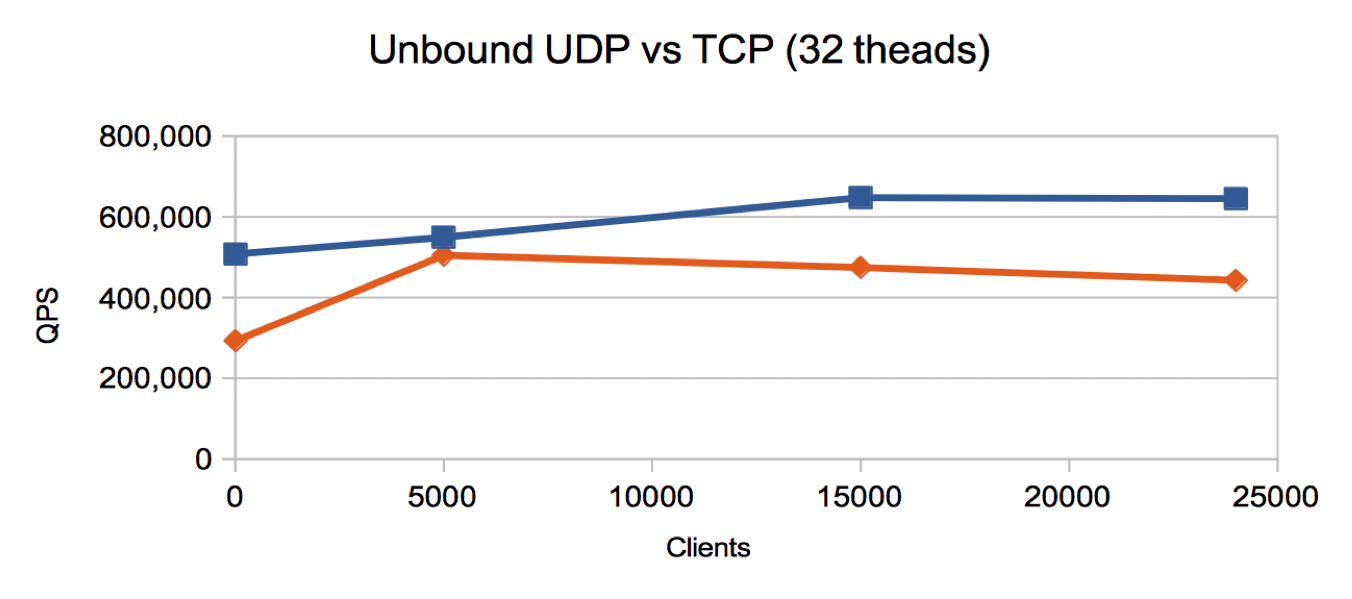

In the following graphs blue lines are UDP and red lines are TCP.

Unbound measurements

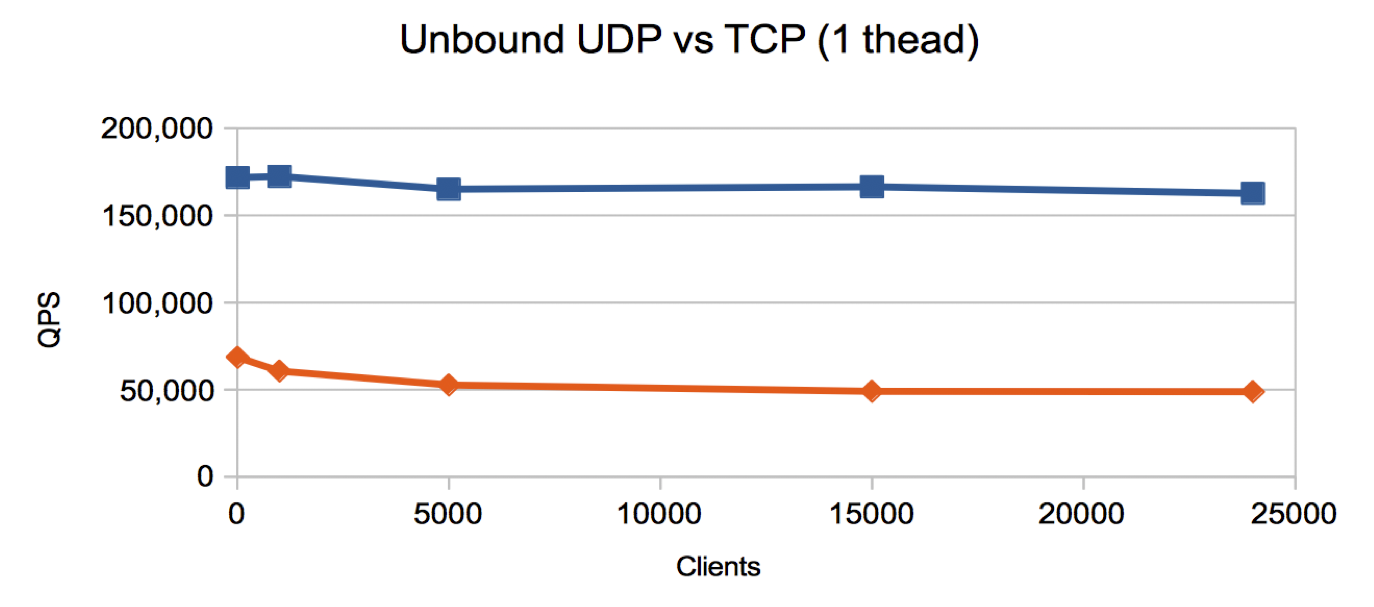

Our findings are shown below for measuring Unbound using 1 thread and then 32 threads (on a 16 core machine with hyper threading enabled).

For a single thread we see a similar profile to the above graph from High-Performance DNS over TCP by Baptiste Jonglez, however:

- with a slightly lower throughput and less dramatic decline as the number of clients increases

- and we observe that TCP is 32% of UDP throughput for 24,000 clients.

- For 32 threads we see a quite different profile: a significantly higher throughput and TCP is 67% of UDP throughput for 24,000 clients.

- We also note it is almost the same for 5000 clients and suspect the decrease above that might be related to IRQ handling.

Conclusion: When Unbound has sufficient resources, TCP throughput is about 2/3 of UDP throughput even at large numbers of clients.

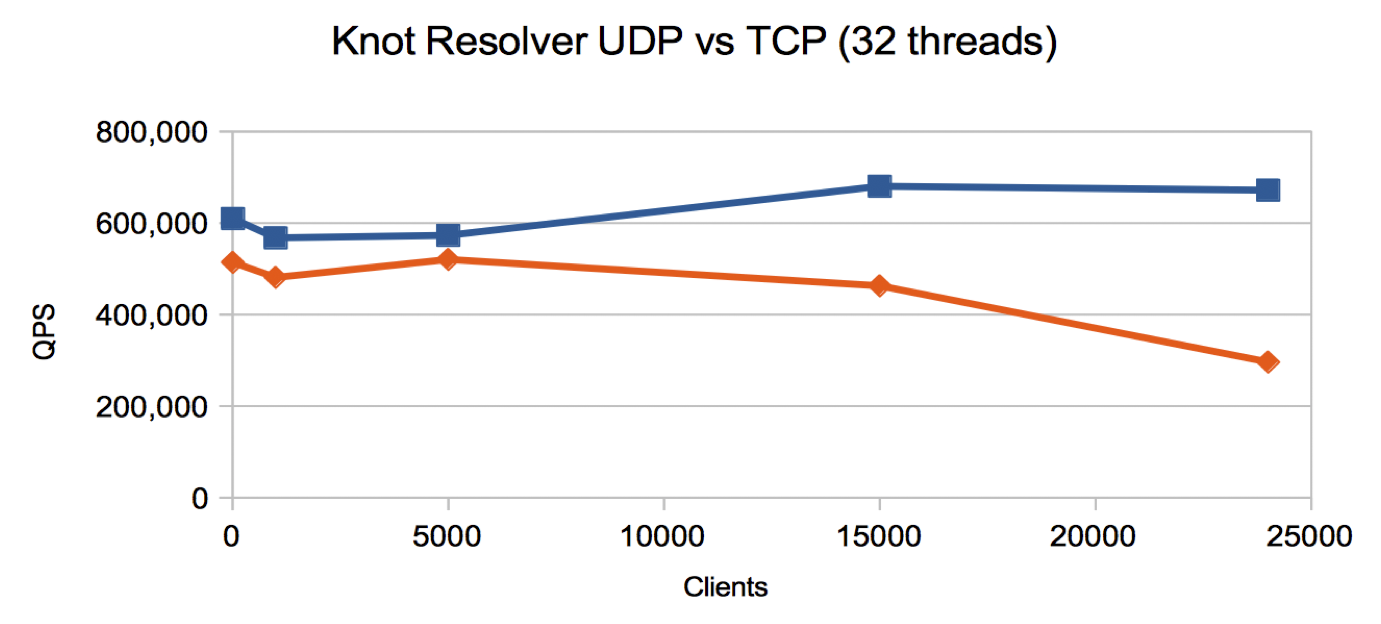

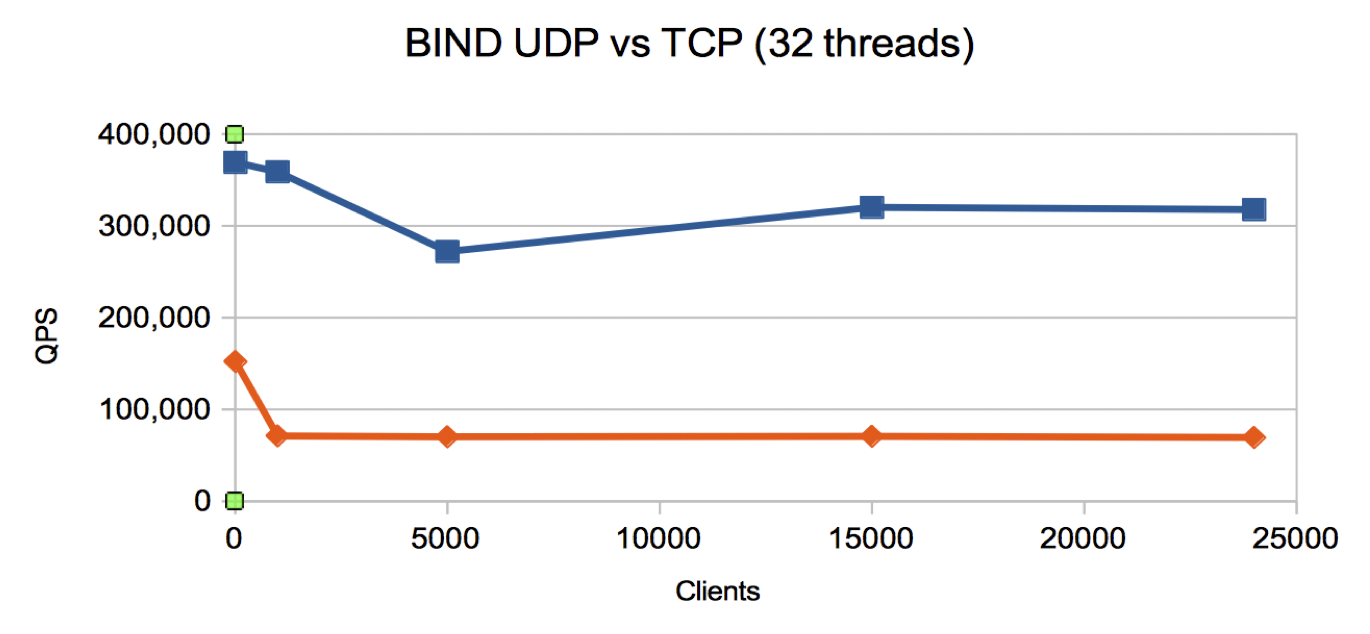

Knot Resolver and BIND

We repeated the test runs for both Knot Resolver and BIND for many clients - the results are below.

Knot Resolver:

- TCP is 50% of UDP at 24,000 clients

- Similar profile and throughput to Unbound

BIND:

- TCP is 25% of UDP at 24,000 clients

- Lower UDP throughput than the other nameservers and a strange flat profile for TCP

dnsdist

We were unable to scale the number of simultaneous clients that dnsdist would accept over TCP past 1000. The reason for this, we have confirmed with PowerDNS, is due to the fundamental architecture of dnsdist when dealing with TCP connections - the number of simultaneous connections that can be processed is entirely dependent on the size of the thread pool. Increasing this takes system resources, and is not practicable beyond a point dependent on the server hardware.

Conclusion:

We have had to conclude that dnsdist is currently optimised for only a moderate number of client connections. We believe recent work in dnsdist is changing this model for the DoH implementation.

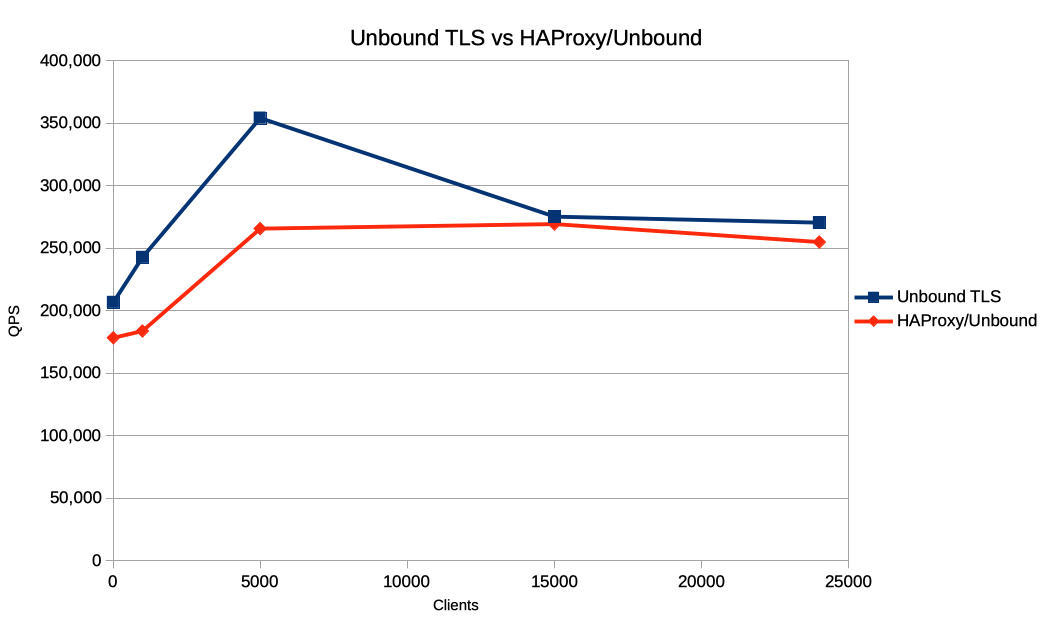

Results - TLS proxy vs native TLS

HAProxy fronting Unbound

Finally, we also investigated the performance of native nameserver TLS support vs a TLS-TCP proxy/TCP nameserver setup (no ’traditional’ TLS proxy that we know of can receive DNS-over-TLS and forward over UDP).

To do this we configured Unbound to use 32 threads, and configured HAProxy to use 32 single-threaded processes (the recommended configuration for HAProxy in the TLS-TCP proxy use case). Note that with fewer threads available to HAProxy it appeared that the encryption/decryption process was the main performance bottleneck.

- Performance of the proxy/nameserver combination is slightly below that of the native nameserver - unsurprising given that the proxy introduces an extra connection into the end to end processing chain

- However the overhead of using a proxy is at worst in the region of 20% of peak throughput.

- Adding DNS-over-TLS service to an existing nameserver when native nameserver TLS support is not available is, potentially, a realistic performance option for operators.

Conclusion:

These results show that use of a TLS-TCP proxy versus native nameserver TLS support performs as might be expected.

Limitations of these measurements

These measurements use a modified version of dnsperf, a widely used DNS UDP benchmarking tool. UDP benchmarking is rather simplistic: it is only concerned with throughput and latency and has the luxury of treating the client population as homogeneous.

Session based benchmarking can’t do this:

- Consider individual client experience (throughput & latency)

- Clients behave differently: must simulate client population with varying profiles (qps, idle timeouts)

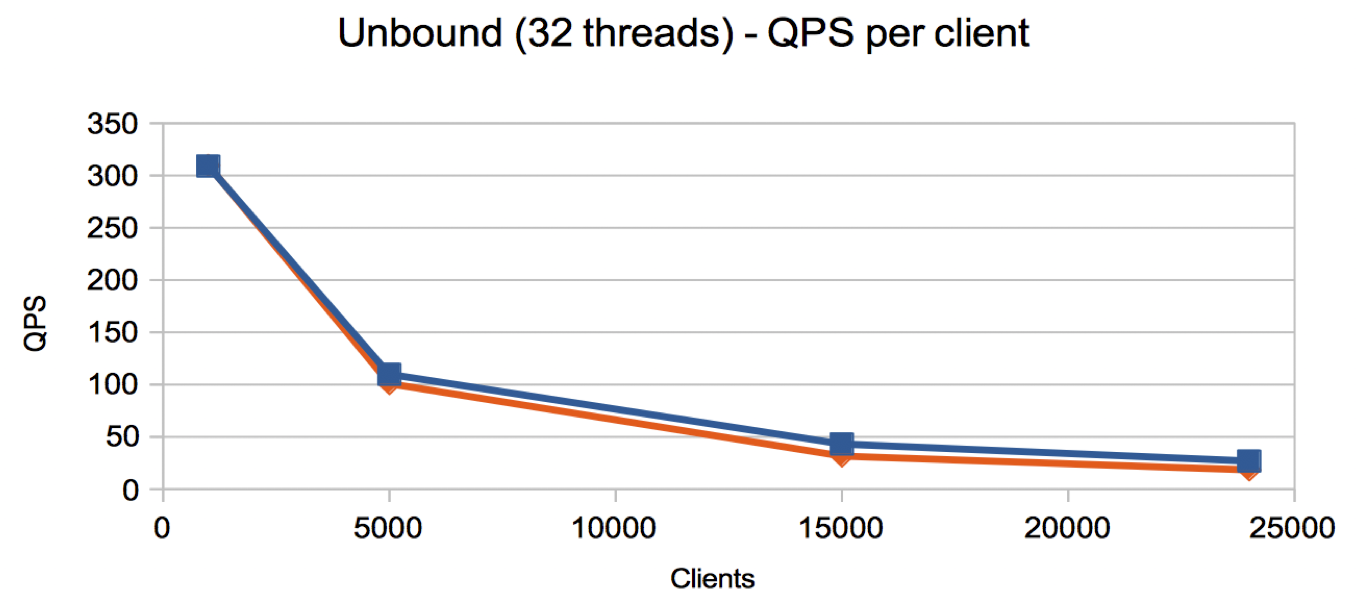

For example, taking our measurements of Unbound (32 threads) and converting the data to represent the average queries per second per client gives the following graph:

- With 24,000 clients, each client is making ~20 queries per second.
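The conversion behind this graph is a simple division of aggregate throughput by the number of clients. As a rough sketch (the function names are ours), the per-client figure above implies an aggregate in the region of 480,000 queries per second; that aggregate is a back-calculation from the rounded ~20 q/s figure, not a number quoted from the measurements.

```python
def per_client_qps(aggregate_qps, clients):
    """Average queries per second seen by each client."""
    if clients <= 0:
        raise ValueError("clients must be positive")
    return aggregate_qps / clients


def implied_aggregate_qps(per_client, clients):
    """Aggregate server throughput implied by a per-client rate."""
    return per_client * clients


# Back-calculate the aggregate from the figures in the text.
total = implied_aggregate_qps(20, 24_000)
print(total)
print(per_client_qps(total, 24_000))
```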

Real DNS traffic data

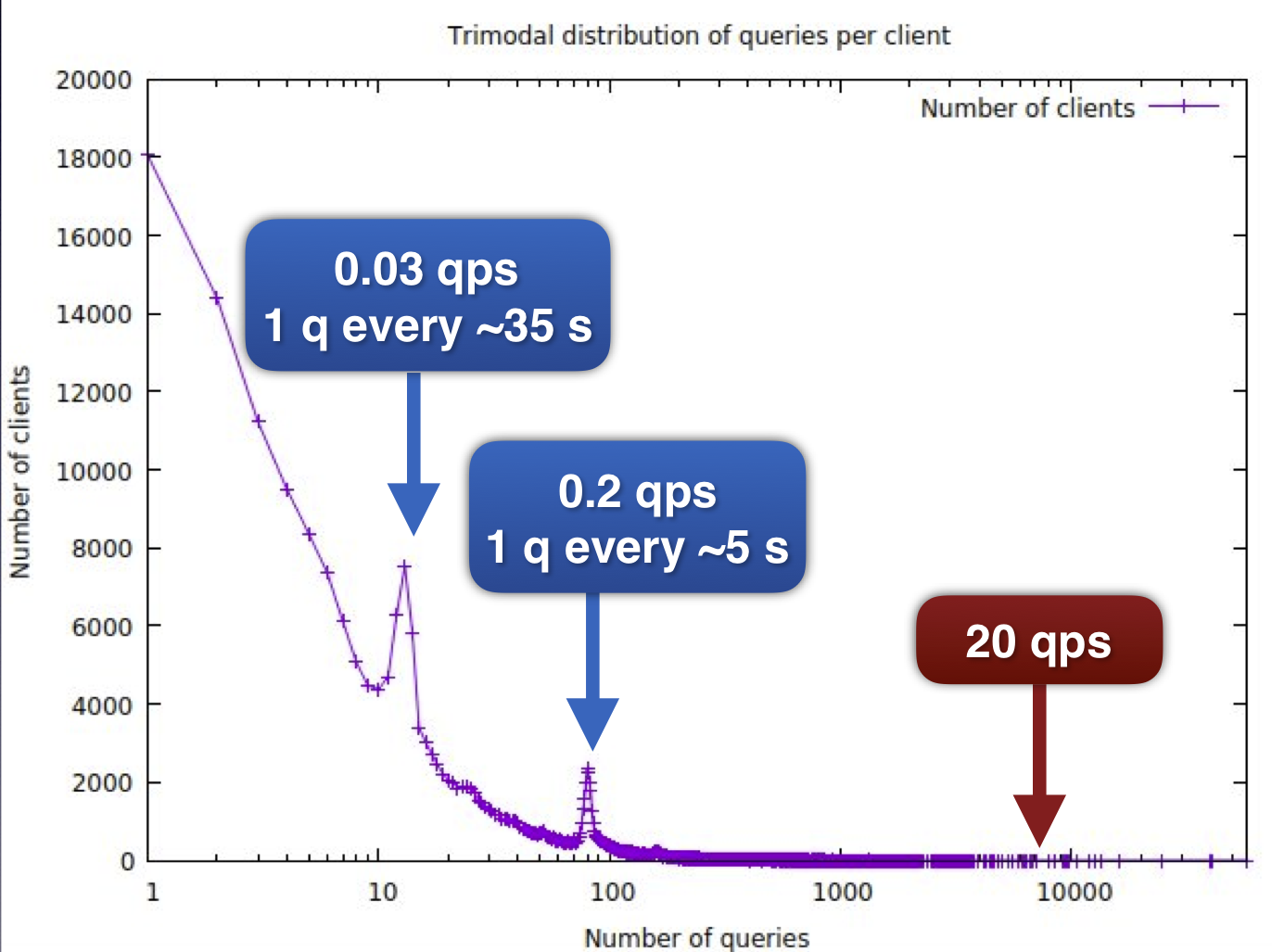

Very little real world data for DNS client populations is available that can describe the behaviour of individual clients. Some recently shared data (courtesy of Bert Hubert at PowerDNS) show how much variation there can be even in a small traffic sample. The following data was collected in a 7 minute window observing traffic on a recursive resolver. We estimate the number of individual clients (addresses) is in the region of 250k.

- It can be easily observed that only a handful of clients are reaching a query rate of 20 q per second, and these are likely to be either forwarding resolvers or many devices behind e.g. a NAT

- The distribution is smooth apart from 2 peaks, which correspond to 1 query every ~35s and 1 query every ~5s.

- We see a significant number of clients performing only a single query during the measurement window.
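To relate these rates to per-client query counts over the capture, the sketch below converts a query interval or rate into an approximate number of queries in the 7 minute window. It reads the two peaks as one query every ~35 s and one every ~5 s; the helper names are ours and the results are approximations, since the exact count depends on where in the window a client's first query falls.

```python
WINDOW_SECONDS = 7 * 60  # 7 minute capture window from the text


def queries_from_interval(interval_s, window_s=WINDOW_SECONDS):
    """Approximate queries sent by a client that queries once per interval."""
    return window_s / interval_s


def queries_from_rate(qps, window_s=WINDOW_SECONDS):
    """Approximate queries sent by a client with a steady query rate."""
    return qps * window_s


print(queries_from_interval(35))  # client at the ~35 s peak
print(queries_from_interval(5))   # client at the ~5 s peak
print(queries_from_rate(20))      # client at the benchmark's ~20 q/s
```

On this reading, a client at the benchmark rate of ~20 q/s would send several thousand queries in the window, while clients at the two peaks send only tens.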

It must be noted that this data is from a UDP client population and UDP clients use a different nameserver selection algorithm to that specified for session based DNS (RFC 7766). So there may be many reasons for the overall distribution including:

- Client resolver choice algorithm

- Service provider load balancing

- Client software performing periodic network probing

- Bursty traffic and device idle behaviour

- Routers with many devices

- Forwarding resolvers

We believe we need to gather real world (anonymised) data from operators of DNS Privacy servers to correctly understand the client profiles of session based DNS to aid with further benchmarking.

HTTP-like client population modelling?

We suggest that further useful work on DNS benchmarking for session based protocols (DoT, DoH or DoQ) would require a new approach. In addition to gathering real world data we believe a new benchmarking tool is required.

We have investigated a number of tools designed for HTTP benchmarking that are capable of generating traffic with different percentages of client behaviour - our report is here. However we did not find any that could be easily modified to become useful DNS benchmarking tools.

We suggest a new, hybrid tool will be required with a high DNS query throughput but with HTTP tester-like scripting capabilities to model a diverse client population. We have sketched out some initial requirements for such a tool and are happy to hear feedback on this proposal.
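As a purely illustrative sketch of what modelling a diverse client population could look like, the code below draws clients from weighted profiles with different query rates and idle timeouts. Every profile name, weight, rate and timeout here is hypothetical and chosen only for illustration; none of it comes from measured data or from the requirements mentioned above.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientProfile:
    name: str
    weight: float          # share of the population (hypothetical)
    qps: float             # mean queries per second (hypothetical)
    idle_timeout_s: float  # idle time before the connection closes (hypothetical)


# Hypothetical profiles, for illustration only.
PROFILES = [
    ClientProfile("single-device", 0.90, 0.05, 10.0),
    ClientProfile("home-router", 0.09, 1.0, 30.0),
    ClientProfile("forwarder", 0.01, 20.0, 120.0),
]


def build_population(n_clients, profiles=PROFILES, seed=0):
    """Sample n_clients profiles according to their weights."""
    rng = random.Random(seed)
    weights = [p.weight for p in profiles]
    return rng.choices(profiles, weights=weights, k=n_clients)


def aggregate_qps(population):
    """Total offered load if every client queries at its mean rate."""
    return sum(client.qps for client in population)


def stays_connected(profile):
    """True if the mean gap between queries is shorter than the idle timeout."""
    return (1.0 / profile.qps) < profile.idle_timeout_s


population = build_population(10_000)
print(f"offered load: {aggregate_qps(population):.0f} q/s")
for profile in PROFILES:
    print(profile.name, "keeps its connection open:", stays_connected(profile))
```

A tool along these lines would also need to drive real connections at high throughput, which this sketch does not attempt.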